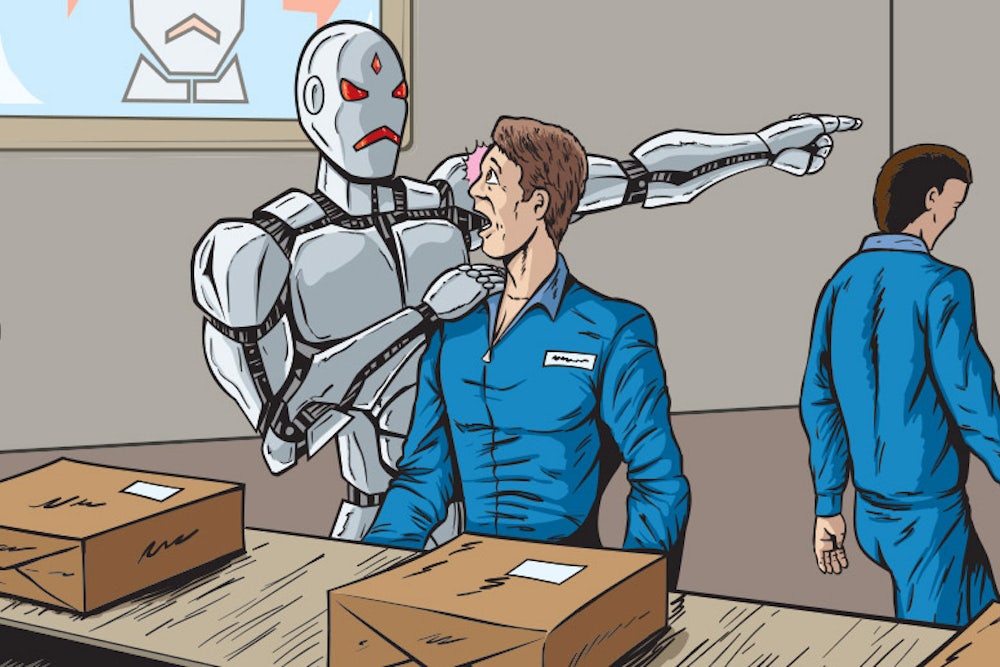

Very few of us can be sure that our jobs will not, in the near future, be done by machines. We know about cars built by robots, cashpoints replacing bank tellers, ticket dispensers replacing train staff, self-service checkouts replacing supermarket staff, telephone operators replaced by “call trees,” and so on. But this is small stuff compared with what might happen next.

Nursing may be done by robots, delivery men replaced by drones, GPs replaced by artificially “intelligent” diagnosers and health-sensing skin patches, back-room grunt work in law offices done by clerical automatons, and remote teaching conducted by computers. In fact, it is quite hard to think of a job that cannot be partly or fully automated. And technology is a classless wrecking ball—the old blue-collar jobs have been disappearing for years; now they are being followed by white-collar ones.

Ah, you may say, but human beings will always be better. This misses the point. It does not matter if the new machines never achieve full human-like consciousness, or even real intelligence; they can almost certainly achieve just enough to do your job—not as well as you, perhaps, but much, much more cheaply. To modernise John Ruskin, “There is hardly anything in the world that some robot cannot make a little worse and sell a little cheaper, and the people who consider price only are this robot’s lawful prey.”

Inevitably, there will be social and political friction. The onset has been signalled by skirmishes such as the London Underground strikes over ticket-office staff redundancies caused by machine-readable Oyster cards, and by the rage of licensed taxi drivers at the arrival of online unlicensed car booking services such as Uber, Lyft, and Sidecar.

This resentment is intensified by rising social inequality. Everybody now knows that neoliberalism did not deliver the promised “trickle-down” effect; rather, it delivered trickle-up, because, even since the recession began, almost all the fruits of growth have gone to the rich. Working and middle-class incomes have flatlined or fallen. Now, it seems, the wealthy cyber-elites are creating machines to put the rest of us out of work entirely.

The effect of this is to undermine the central argument of those who hype the benefits of job replacement by machines. They say that new and better jobs will be created. They say this was always true in the past, so it will be true now. (This is the precise correlative of the neoliberals’ “rising tide floats all boats” argument.) But people now doubt the “new and better jobs” line trotted out—or barked—by the prophets of robotization. The new jobs, if there are any, will more probably be serf-like attenders to the needs of the machine, burger-flippers to the robot classes.

Nevertheless, this future, too, is being sold in neoliberal terms. “I am sure,” wrote Mitch Free (sic) in a commentary for Forbes on June 11, “it is really hard [to] see when your pay check is being directly impacted but the reality to any market disruption is that the market wants the new technology or business model more than they want what you offer, otherwise it would not get off the ground. The market always wins, you cannot stop it.”

Free was writing in response to what probably seemed to him a completely absurd development, a nightmarish impossibility—the return of Luddism. “Luddite” has, in the past few decades, been such a routine term of abuse for anybody questioning the march of the machines (I get it all the time) that most people assume that, like “fool,” “idiot” or “prat,” it can only ever be abusive. But, in truth, Luddism has always been proudly embraced by the few and, thanks to the present climate of machine mania and stagnating incomes, it is beginning to make a new kind of sense. From the angry Parisian taxi drivers who vandalised a car belonging to an Uber driver to a Luddite-sympathetic column by the Nobel laureate Paul Krugman in the New York Times, Luddism in practice and in theory is back on the streets.

Luddism derives its name from Ned Ludd, who is said to have smashed two “stocking frames”—knitting machines—in a fit of rage in 1779, but who may have been a fictional character. It became a movement, with Ludd as its Robin Hood, between 1811 and 1817 when English textile workers were threatened with unemployment by new technology, which the Luddites defined as “machinery hurtful to Commonality.” Mills were burned, machinery was smashed, and the army was mobilized. At one time, according to Eric Hobsbawm, there were more soldiers fighting the Luddites than were fighting Napoleon in Spain. Parliament passed a bill making machine-smashing a capital offence, a move opposed by Byron, who wrote a song so seditious that it was not published until after his death: “... we/Will die fighting, or live free,/And down with all kings but King Ludd!”

Once the Luddites had been suppressed, the Industrial Revolution resumed its course and, over the ensuing two centuries, proved the most effective wealth-creating force ever devised by man. So it is easy to say the authorities were on the right side of history and the Luddites on the wrong one. But note that this is based on the assumption that individual sacrifice in the present—in the form of lost jobs and crafts—is necessary for the mechanized future. Even if this were true, there is a dangerous whiff of totalitarianism in the assumption.

Neo-Luddism began to emerge in the postwar period. First, the power of nuclear weapons made it clear to everybody that our machines could now put everybody out of work for ever by the simple expedient of killing them and, second, in the 1980s and 1990s it became apparent that new computer technologies had the power to change our lives completely.

Thomas Pynchon, in a brilliant essay for The New York Times in 1984—he noted the resonance of the year—responded to the first new threat and, through literature, revitalized the idea of the machine as enemy. “So, in the science fiction of the Atomic Age and the cold war, we see the Luddite impulse to deny the machine taking a different direction. The hardware angle got de-emphasized in favour of more humanistic concerns—exotic cultural evolutions and social scenarios, paradoxes and games with space/time, wild philosophical questions—most of it sharing, as the critical literature has amply discussed, a definition of ‘human’ as particularly distinguished from ‘machine’.”

In 1992, Neil Postman, in his book Technopoly, rehabilitated the Luddites in response to the threat from computers: “The term ‘Luddite’ has come to mean an almost childish and certainly naive opposition to technology. But the historical Luddites were neither childish nor naive. They were people trying desperately to preserve whatever rights, privileges, laws, and customs had given them justice in the older world-view.”

Underpinning such thoughts was the fear that there was a malign convergence—perhaps even a conspiracy—at work. In 1961, even President Eisenhower warned of the anti-democratic power of the “military-industrial complex.” In 1967 Lewis Mumford spoke presciently of the possibility of a “mega-machine” that would result from “the convergence of science, technics, and political power.” Pynchon picked up the theme: “If our world survives, the next great challenge to watch out for will come—you heard it here first—when the curves of research and development in artificial intelligence, molecular biology, and robotics all converge. Oboy.”

The possibility is with us still in Silicon Valley’s earnest faith in the Singularity—the moment, possibly to come in 2045, when we build our last machine, a super-intelligent computer that will solve all our problems and enslave or kill or save us. Such things are true only to the extent to which they are believed—and, in the Valley, this is believed, widely.

Environmentalists were obvious allies of neo-Luddism—adding global warming as a third threat to the list—and globalism, with its tendency to destroy distinctively local and cherished ways of life, was an obvious enemy. In recent decades, writers such as Chellis Glendinning, Langdon Winner, and Jerry Mander have elevated the entire package into a comprehensive rhetoric of dissent from the direction in which the world is going. Winner wrote of Luddism as an “epistemological technology.” He added: “The method of carefully and deliberately dismantling technologies, epistemological Luddism, if you will, is one way of recovering the buried substance upon which our civilisation rests. Once unearthed, that substance could again be scrutinised, criticised, and judged.”

It was all very exciting, but then another academic rained on all their parades. His name was Ted Kaczynski, although he is more widely known as the Unabomber. In the name of his own brand of neo-Luddism, Kaczynski’s bombs killed three people and injured many more in a campaign that ran from 1978 to 1995. His 1995 manifesto, “Industrial Society and Its Future,” said: “The Industrial Revolution and its consequences have been a disaster for the human race,” and called for a global revolution against the conformity imposed by technology.

The lesson of the Unabomber was that radical dissent can become a form of psychosis and, in doing so, undermine the dissenters’ legitimate arguments. It is an old lesson and it is seldom learned. The British Dark Mountain Project (dark-mountain.net), for instance, is “a network of writers, artists, and thinkers who have stopped believing the stories our civilisation tells itself.” They advocate “uncivilisation” in writing and art—an attempt “to stand outside the human bubble and see us as we are: highly evolved apes with an array of talents and abilities which we are unleashing without sufficient thought, control, compassion or intelligence.” This may be true, but uncivilising ourselves to express this truth threatens to create many more corpses than ever dreamed of by even the Unabomber.

Obviously, if neo-Luddism is conceived of in psychotic or apocalyptic terms, it is of no use to anybody and could prove very dangerous. But if it is conceived of as a critical engagement with technology, it could be useful and essential. So far, this critical engagement has been limited for two reasons. First, there is the belief—it is actually a superstition—in progress as an inevitable and benign outcome of free-market economics. Second, there is the extraordinary power of the technology companies to hypnotise us with their gadgets. Since 1997 the first belief has found justification in a management theory that, bizarrely, upon closer examination, turns out to be the mirror image of Luddism. That was the year in which Clayton Christensen published The Innovator’s Dilemma, judged by The Economist to be one of the most important business books ever written. Christensen launched the craze for “disruption.” Many other books followed and many management courses were infected. Jill Lepore reported in the New Yorker in June that “this fall, the University of Southern California is opening a new program: ‘The degree is in disruption,’ the university announced.” And back at Forbes it is announced with glee that we have gone beyond disruptive innovation into a new phase of “devastating innovation.”

It is all, as Lepore shows in her article, nonsense. Christensen’s idea was simply that innovation by established companies to satisfy customers would be undermined by the disruptive innovation of market newcomers. It was a new version of Henry Ford and Steve Jobs’s view that it was pointless asking customers what they want; the point was to show them what they wanted. It was nonsense because, Lepore says, it was only true for a few, carefully chosen case histories over very short time frames. The point was made even better by Christensen himself when, in 2007, he made the confident prediction that Apple’s new iPhone would fail.

Nevertheless, disruption still grips the business imagination, perhaps because it sounds so exciting. In Luddism you smash the employer’s machines; in disruption theory you smash the competitor’s. The extremity of disruptive theory provides an accidental justification for extreme Luddism. Yet still, technocratic propaganda routinely uses the vocabulary of disruption theory.

Meanwhile, in the New York Times, Paul Krugman wrote a very neo-Luddite column that questioned the consoling belief that education would somehow solve the problem of the destruction of jobs by technology. “Today, however, a much darker picture of the effects of technology on labour is emerging. In this picture, highly educated workers are as likely as less educated workers to find themselves displaced and devalued, and pushing for more education may create as many problems as it solves.”

In other words—against all the education boosters from Tony Blair onwards—you can’t learn yourself into the future, because it is already owned by others, primarily the technocracy. But it is expert dissidents from within the technocracy who are more useful for moderate neo-Luddites. In 2000, Bill Joy, a co-founder of Sun Microsystems and a huge figure in computing history, broke ranks with an article for Wired entitled “Why the future doesn’t need us.” He saw that many of the dreams of Silicon Valley would either lead to, or deliberately include, termination of the human species. They still do—believers in the Singularity look forward to it as a moment when we will transcend our biological condition.

“Given the incredible power of these new technologies,” Joy wrote, “shouldn’t we be asking how we can best coexist with them? And if our own extinction is a likely, or even possible, outcome of our technological development, shouldn’t we proceed with great caution?”

Finally, there is Jaron Lanier, one of the creators of virtual reality, who lost faith in the direction technology was taking when his beloved music industry was eviscerated by the destruction of jobs that followed the arrival of downloading. Why, he repeatedly asks in books such as You Are Not a Gadget, should we design machines that lower the quality of things? This wasn’t what the internet was supposed to do.

Moderate neo-Luddism involves critical scepticism about the claims by the makers of the new machines and even more critical scepticism about the societies—primarily Silicon Valley—from which these anti-human ideas spring. At least now there is a TV satirical comedy about the place—HBO’s Silicon Valley—which will spread the news that the technocracy consists of very strange people who are, indeed, capable of building “machinery hurtful to Commonality.” The running joke in the first episode was about the way the technocrats always claim to be working to make a better world. As if.

Luddite laughter is a start. But there’s a long way to go before the technology beast is tamed. For the moment, you still may lose your job to a machine; but at least you can go down feeling and thinking—computers can’t do either.

This article originally appeared in the New Statesman.