Elon Musk Sued Over Grok Creating Fake Porn of Teenagers

Multiple teens say the AI bot was used to remove their clothing in pictures without their consent.

A trio of Oklahoma teenagers are suing Elon Musk’s xAI, accusing the company’s artificial intelligence program of stealing images of them off the internet to create child pornography.

The lawsuit, filed Monday, alleges that a single perpetrator compiled images and videos of at least 18 girls and digitally altered their appearances with tools such as Grok to create nude images of the underage girls.

The suit cites a police arrest made in December and describes an instance in which the individual used Grok to strip a blue bikini from a photo that a girl had posted to her Instagram account in order to “depict her without any clothes.”

It is the first case in which underage victims of such an act have taken legal action against the offender, The Washington Post reported.

The mother of one of the teens told the Post that the incident had “crushed” her daughter, who had previously been an outgoing student-athlete.

“It definitely put her into a little bit of a shell, which we had never seen before,” the mother told the newspaper.

The proliferation of AI-generated media has raised new legal quandaries in recent months, particularly as major chatbot programs, Grok chief among them, have made their image-generating capabilities more accessible to the general public.

A December review by the content analysis firm Copyleaks found that Grok was, at the time, generating “roughly one nonconsensual sexualized image per minute,” each of which was posted directly to X for public consumption. Some of the images circulated by the chatbot included sexually explicit deepfakes of children, The 19th reported.

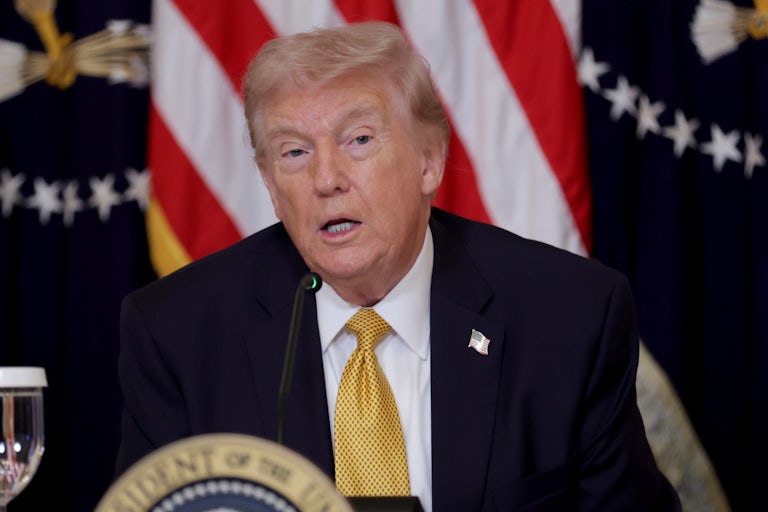

Musk nonetheless vehemently defended his AI creation, writing on X in January that he was, at the time, “not aware of any naked underage images generated by Grok.” “Literally zero,” he said.

There is little recourse for people whose images and likenesses have been stolen to fuel these nonconsensual AI re-creations. In January, the Senate passed the bipartisan DEFIANCE Act, an attempt to create a pathway for civil action against those who produce, distribute, receive, or possess digitally generated porn that uses an individual’s face or likeness without their permission. The bill still awaits action in the House, and it is unclear when that could happen.

Tech experts estimate that the production of deepfakes is doubling every six months, in part due to the widespread availability of AI. While much reporting has focused on how deepfakes and other artificially generated imagery threaten electoral integrity, coverage has largely overlooked the people most harmed by the practice. The vast majority of deepfakes, some 90 percent, are nonconsensual pornography depicting women, Context News reported in 2024.

“These young people—these children—are facing a lifetime of having these … sexualized images of what appears to be a child’s body out there on the internet,” the teens’ lawyer, Vanessa Baehr-Jones, told the Post. “It wouldn’t have been possible but for this tool that xAI released knowing full well that this material could be generated.”